
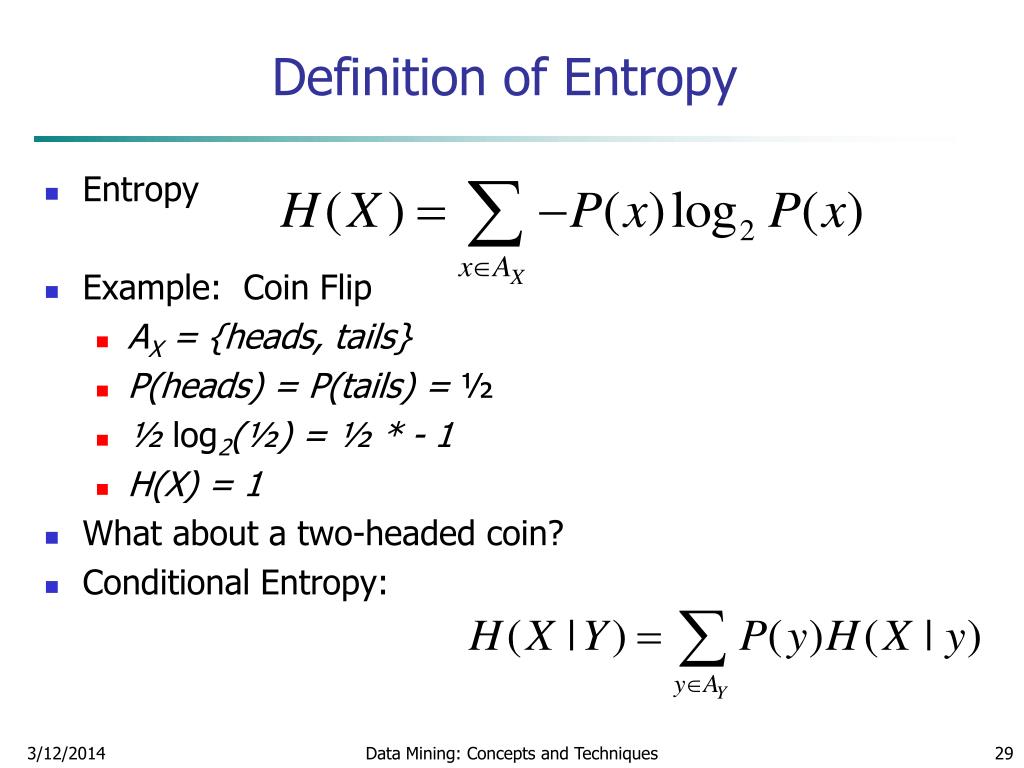

Long story short, entropy says something about the quality of energy.

This is the point at which the rules of thermodynamics used to calculate the efficiency of a cooling machine reflect the outcome of the universe. What is entropy? With this simple question we are moving into something far more fundamental, even philosophical. If someone tells you about the pressure and temperature of a cooling machine, it immediately rings a bell.īut if asked about the change in entropy of a system? Well… That’s rather abstract. Temperature, pressure and energy are familiar concepts. Part one: a simple definition Part two: birth of the Second Law Part three: the way the universe will end The most mysterious of all concepts From the cooling in a refrigerator to the formation of a thought. The latter is one of the all-time great laws of science because it tells us why anything happens at all. In this blog series we give an easy-to-grasp definition of entropy and th e second law of thermodynamics. To transform model output into a probability of class membership given $i$ potential classes, a softmax function is used.What is entropy? Part 1: A simple definitionĮver wondered why heat never flows from cold to hot? Why we cannot seem to create a machine with 100% efficiency and why we know our past but not our future? It all has to do with a concept called entropy. weights def get_gradient ( self ): return self. grad_input def get_weights ( self ): return self. sum ( grad_output, axis = 0, keepdims = True ) '''Update weights and bias via SGD''' self.

bias def backward ( self, value, grad_output ): '''The backward pass computes the contribution of this layer to the overall loss function, given by''' self.

n_outputs )) def forward ( self, value ): return value self. initialize_weights () def initialize_weights ( self ): self. n_inputs: batch size, equal to number of samples processed each iteration. Choose sufficently small values to ensure timely convergence. Parameters: - learning_rate: speed of updating weights W. Ġ.003125 0.003125 0.003125 0.003125 0.003125 0.003125 0.003125 0.003125]]Ĭlass Dense ( Layer ): def _init_ ( self, n_inputs, n_outputs, learning_rate = 0.001 ): '''A functional approximation machine to learn weights W in f(x) = XW + b such that f(x) approximates target values y as closely as possible, accoarding to some criteria. eye ( n_units ) return grad_output d_layer_d_input Nevertheless, the proper matrix math is shown here for completeness. By default, the identity function is used for activation in the forward pass, so the Jacobian is simply an n x n identity matrix.

* d layer 0 / d value At each layer only a single term in the product is computed, taking the previous computations as an input. Given k layers, the chain rule for the derivative of the whole graph is written: d loss / d value = d loss / d layer * d layer k / d layer (k-1). Takes the previous layers gradient as an argument to compute efficently. Identity function is used by default.''' return value def backward ( self, value, grad_output ): '''Compute derivative of the loss function from right to left in the computational graph, with respect to a given input (backprop). Empty by default''' pass def forward ( self, value ): '''Compute the forward pass on the computational graph. ''' def _init_ ( self, * kwargs ): '''Used to store layer variables, e.g. Class Layer : '''Basic neural network class with a forward and backward pass functions.
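The softmax step can be sketched in a few lines of NumPy. This is a minimal version, assuming the model output is a 2-D array of raw scores with shape (batch, $i$); the function name `softmax` and the max-subtraction trick for numerical stability are our own choices, not something confirmed by the original code.

```python
import numpy as np


def softmax(logits):
    """Map raw scores of shape (batch, i) to class probabilities per row.

    Subtracting the row-wise maximum does not change the result (softmax is
    invariant to adding a constant to every score in a row) but keeps
    np.exp from overflowing for large scores.
    """
    shifted = logits - np.max(logits, axis=1, keepdims=True)
    exp_scores = np.exp(shifted)
    return exp_scores / np.sum(exp_scores, axis=1, keepdims=True)


if __name__ == "__main__":
    # Hypothetical scores for 2 samples and i = 3 classes.
    scores = np.array([[2.0, 1.0, 0.1],
                       [1000.0, 1000.0, 1000.0]])
    probs = softmax(scores)
    print(probs)
    print(probs.sum(axis=1))  # each row sums to 1
```

The shift works because multiplying numerator and denominator by the same factor exp(-max) cancels out; without it, a score of 1000 would overflow `np.exp` to infinity.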

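A finite-difference gradient check is a common way to confirm that a dense layer's backward pass matches its forward pass. The standalone sketch below re-implements f(X) = XW + b with plain functions, pairs it with a hypothetical squared-error loss, and compares the analytic gradients (grad_output @ W.T for the input, X.T @ grad_output for the weights, the batch sum of grad_output for the bias) against central differences. The function names, the loss, and the array shapes are illustrative assumptions, not the author's code.

```python
import numpy as np


def dense_forward(X, W, b):
    """Forward pass f(X) = XW + b, with X of shape (batch, n_inputs)."""
    return X @ W + b


def squared_error(X, W, b, y):
    """Hypothetical loss used only for this check: 0.5 * sum((f(X) - y)^2)."""
    return 0.5 * np.sum((dense_forward(X, W, b) - y) ** 2)


def dense_backward(X, W, grad_output):
    """Analytic gradients w.r.t. the layer's input, weights and bias."""
    grad_input = grad_output @ W.T                          # d loss / d X
    grad_weights = X.T @ grad_output                        # d loss / d W
    grad_bias = np.sum(grad_output, axis=0, keepdims=True)  # d loss / d b
    return grad_input, grad_weights, grad_bias


def numerical_grad(loss, param, eps=1e-6):
    """Central finite differences: nudge one entry of `param` in place at a time."""
    grad = np.zeros_like(param)
    for idx in np.ndindex(param.shape):
        original = param[idx]
        param[idx] = original + eps
        loss_plus = loss()
        param[idx] = original - eps
        loss_minus = loss()
        param[idx] = original  # restore the entry before moving on
        grad[idx] = (loss_plus - loss_minus) / (2 * eps)
    return grad


def check_dense_gradients(seed=0):
    """Return the max absolute analytic-vs-numerical difference for X, W and b."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(4, 3))  # batch of 4 samples, 3 input features
    W = rng.normal(size=(3, 2))  # 3 inputs -> 2 outputs
    b = rng.normal(size=(1, 2))
    y = rng.normal(size=(4, 2))

    def loss():
        return squared_error(X, W, b, y)

    # For this loss, d loss / d f is simply the residual f(X) - y.
    grad_output = dense_forward(X, W, b) - y
    analytic = dense_backward(X, W, grad_output)
    return {
        name: float(np.max(np.abs(a - numerical_grad(loss, p))))
        for name, a, p in zip(("X", "W", "b"), analytic, (X, W, b))
    }


if __name__ == "__main__":
    print(check_dense_gradients())
```

Because the loss is quadratic in every entry, central differences are exact apart from floating-point rounding, so all three reported differences should be negligibly small.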